Improving Transactional Emails

An idea for indie developers and the BuildInPublic community.

Once upon a time, I wrote a custom email-sending feature for transactional emails. The growth hacker in me couldn’t leave it at that, though.

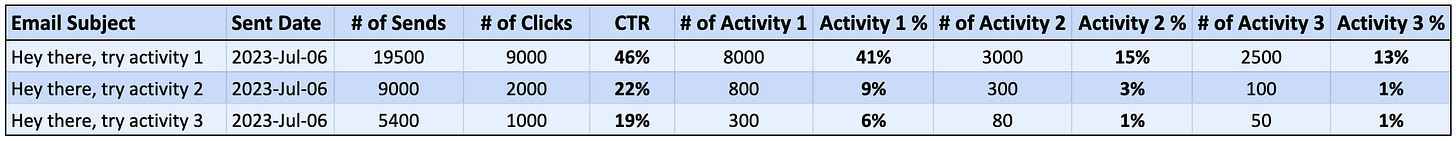

I wanted to know how effective those emails were, so I built a system that would output a table like so:

The table listed each email subject, the number of sends, and how many of the people who received that transactional email actually went on to complete an action. I usually tracked a few actions: first, whether recipients did what the email asked of them, and then whether they took any further or follow-up actions.

Most people would look at the number of opens here, which can be hard to track and isn’t always reliable. You might go a bit further and look at the number of clicks on a link, but that isn’t a great measure of a campaign’s effectiveness either.

The best part, though, was that I built it in the same MySQL database as our app data. We already had tables/logs of, let’s say, when a user had completed an action. So I could take the list of user_ids we had sent an email to and match it against whether or not each of those users had completed the activity. This was much, much more powerful than counting opens or clicks.
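Because the email log lived next to the app’s activity data, the report comes down to a single join. Below is a minimal sketch of that query, assuming two hypothetical tables (`sent_emails` and `user_actions`; the post doesn’t give the real schema) and using Python’s built-in `sqlite3` in place of MySQL so it runs standalone. For each subject it counts distinct recipients, and how many of them completed the tracked action at or after the time the email was sent.

```python
import sqlite3

# Hypothetical tables: a log of sent emails and the app's own activity log.
SCHEMA = """
CREATE TABLE sent_emails (user_id INTEGER, subject TEXT, sent_at TEXT);
CREATE TABLE user_actions (user_id INTEGER, action TEXT, done_at TEXT);
"""

# Per subject: distinct recipients, and distinct recipients who completed
# the tracked action at or after the moment the email was sent.
REPORT_SQL = """
SELECT s.subject,
       COUNT(DISTINCT s.user_id) AS recipients,
       COUNT(DISTINCT a.user_id) AS completed
FROM sent_emails AS s
LEFT JOIN user_actions AS a
       ON a.user_id = s.user_id
      AND a.action = ?
      AND a.done_at >= s.sent_at
GROUP BY s.subject
ORDER BY s.subject
"""


def email_report(conn, action):
    """Return (subject, recipients, completed) rows for one tracked action."""
    return conn.execute(REPORT_SQL, (action,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany(
    "INSERT INTO sent_emails VALUES (?, ?, ?)",
    [
        (1, "Finish your profile", "2023-07-01"),
        (2, "Finish your profile", "2023-07-01"),
        (3, "Finish your profile", "2023-07-01"),
        (4, "Your first project awaits", "2023-07-01"),
    ],
)
conn.executemany(
    "INSERT INTO user_actions VALUES (?, ?, ?)",
    [
        (1, "profile_completed", "2023-07-02"),
        (2, "profile_completed", "2023-06-30"),  # before the email: not counted
        (4, "profile_completed", "2023-07-03"),
    ],
)
print(email_report(conn, "profile_completed"))
# [('Finish your profile', 3, 1), ('Your first project awaits', 1, 1)]
```

The `LEFT JOIN` keeps recipients who never acted, so they still count toward `recipients`. In MySQL you would normally store `sent_at` and `done_at` as `DATETIME` columns; here ISO-format date strings happen to compare correctly as text.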

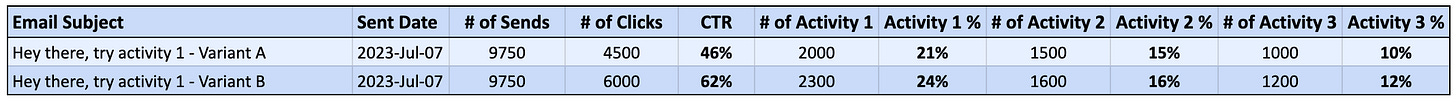

Needless to say, this was great. But how could I make it better? By (1) AB testing and (2) segmenting.

AB testing was the easiest place to start, so I built something that would give me an output like this:

From here, I could see which version of a transactional email led to more completions of the actual activity, as well as the other activities I wanted to track. That let me try out different messages and different email designs. (In my experience, plain-text emails always seem to do better.)

Here’s how my entire system worked:

I made the email templates using a third-party tool, then copied their HTML and stored the raw HTML in my own database.

I made a MySQL database with a few tables:

One table to keep the templates in. These used my own version of mail-merge fields, denoted by double curly braces, e.g. {{first_name}}.

One table to queue the emails, i.e. the actual emails ready to be sent.

One table to track the emails that were sent. This is the table that was later queried to produce the report.

A cron job that did something like a mail merge. It took all the accounts due to receive the email that day, split them down the middle between the A and B variants (or however you want to do it; maybe only 20% go into the test, it’s up to you), then created the “emails to send” in the queue table. Another cron job took care of sending the emails. I preferred to use something like SendGrid, but you can choose your own, and there may be better tools for sending transactional emails these days.
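The mail-merge cron job can be sketched in a few functions: fill the `{{field}}` placeholders in the stored HTML, assign each recipient to a variant, and build the queue rows. This is a minimal sketch under assumptions that are not in the post: the function names and row layout are hypothetical, the split hashes `test_name:user_id` so a user keeps the same variant if the job reruns, and users outside the test get a `"default"` email. Values are HTML-escaped because the templates are stored as raw HTML.

```python
import hashlib
import html
import re

# Mail-merge fields look like {{first_name}} inside the stored HTML.
MERGE_FIELD = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template, fields):
    """Fill every {{name}} in an HTML template, HTML-escaping each value."""
    def substitute(match):
        name = match.group(1)
        if name not in fields:
            raise KeyError(f"no value for merge field {name!r}")
        return html.escape(str(fields[name]))
    return MERGE_FIELD.sub(substitute, template)


def bucket(user_id, test_name):
    """Map a user to a stable pseudo-random number in [0, 1) for one test."""
    digest = hashlib.sha256(f"{test_name}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:6], "big") / 2**48


def assign_variant(user_id, test_name, test_fraction=1.0):
    """Put test_fraction of users in the test, split evenly between A and B."""
    b = bucket(user_id, test_name)
    if b >= test_fraction:
        return "default"  # outside the test: gets the current email
    return "A" if b < test_fraction / 2 else "B"


def build_queue(users, templates, test_name, test_fraction=1.0):
    """Return (user_id, variant, subject, html_body) rows for today's recipients."""
    rows = []
    for user in users:
        variant = assign_variant(user["id"], test_name, test_fraction)
        subject, body = templates[variant]
        rows.append((user["id"], variant, subject, render(body, user)))
    return rows


# Hypothetical templates: variant -> (subject, raw HTML body).
templates = {
    "default": ("Finish setting up", "<p>Hi {{first_name}}, finish setting up your account.</p>"),
    "A": ("Finish setting up", "<p>Hi {{first_name}}, finish setting up your account.</p>"),
    "B": ("One step left", "<p>{{first_name}}, you are one step away.</p>"),
}
users = [{"id": i, "first_name": f"User{i}"} for i in range(1, 1001)]
queue = build_queue(users, templates, "onboarding-v1", test_fraction=0.2)
```

Inserting these rows into the queue table, and the second cron job that hands them to SendGrid (or another provider) and logs them as sent, are left out, since both depend on your database driver and sending service.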

I’m thinking this kind of system would be easy to build now if you hooked your data up to a powerful tool like Mixpanel or similar. If anyone knows a much easier way to achieve what I did here, I’d be happy to learn.

So here’s an idea for the indie hacker/developer/buildinpublic community.

What if we took this a bit further and added some AI to the AB testing process? You could call it a service, or a SaaS, that does:

“AI driven transactional email AB testing for user retention outcomes”

As the name suggests, here’s my idea:

Use the same mechanism as above.

Use AI or GPT to generate variants of the emails.

Use a simple script to determine which variant worked best, in terms of activities completed after the email.

Rinse and repeat by feeding the winning email back into AI/GPT to create a variant of it.
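The “simple script” in step 3 could be as small as a significance check, so that a variant is only declared the winner when the difference in completion rates is unlikely to be noise. A minimal sketch using a standard two-proportion z-test (my choice of test, not something the post prescribes); the function names and the 0.05 threshold are assumptions. It takes each variant’s sends and completions, the same numbers the report table shows.

```python
import math


def compare_variants(sends_a, completed_a, sends_b, completed_b):
    """Two-proportion z-test on completion rates.

    Returns (rate_a, rate_b, two_sided_p_value).
    """
    rate_a = completed_a / sends_a
    rate_b = completed_b / sends_b
    pooled = (completed_a + completed_b) / (sends_a + sends_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    if se == 0:  # both variants at 0% or both at 100%: nothing to separate
        return rate_a, rate_b, 1.0
    z = (rate_b - rate_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return rate_a, rate_b, p_value


def pick_winner(sends_a, completed_a, sends_b, completed_b, alpha=0.05):
    """Return "A" or "B" if one variant is significantly better, else None."""
    rate_a, rate_b, p_value = compare_variants(sends_a, completed_a, sends_b, completed_b)
    if p_value >= alpha:
        return None  # not enough evidence yet: keep sending both variants
    return "A" if rate_a > rate_b else "B"


print(pick_winner(1000, 100, 1000, 150))  # B
print(pick_winner(100, 10, 100, 11))      # None
```

Only the winner, when there is one, would be fed back to the model for the next round. Checking the result every day and stopping as soon as `p_value` dips below the threshold inflates false positives, so it is safer to fix the sample size per round in advance.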

If you want to go further, you could use AI to cluster users into segments, then AB test emails on specific clusters/personas/use cases. That would be quite powerful.
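For the segmentation step, one plain option is k-means clustering over a few per-user activity numbers, then running a separate AB test inside each cluster. A minimal sketch of Lloyd’s k-means in pure Python; the features (logins and actions completed in the last 30 days), the user ids, and `k=2` are illustrative assumptions. In practice you would likely scale the features first and use a library implementation such as scikit-learn’s `KMeans`.

```python
import math
import random


def kmeans(points, k, iterations=100, seed=0):
    """Lloyd's k-means. Returns (centroids, labels), one label per point."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]  # start from k random users
    labels = None
    for _ in range(iterations):
        # Assignment step: each user joins the nearest centroid.
        new_labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing: converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:  # an empty cluster keeps its previous centroid
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids, labels


# Hypothetical features per user: (logins in last 30 days, actions completed).
users = {
    101: (1, 0), 102: (2, 1), 103: (1, 1),
    201: (25, 14), 202: (30, 12), 203: (27, 15),
}
ids = list(users)
_, labels = kmeans([users[i] for i in ids], k=2)
segments = {}
for user_id, label in zip(ids, labels):
    segments.setdefault(label, []).append(user_id)
print(sorted(segments.values()))  # [[101, 102, 103], [201, 202, 203]]
```

Each resulting segment can then get its own pair of variants, so a message that works for highly active users doesn’t mask one that works better for dormant ones.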

What do you think? Would this work? Is there something out there that already does this? Chat with me at https://twitter.com/growth_hc/status/1676910727427428352 to debate and discuss.